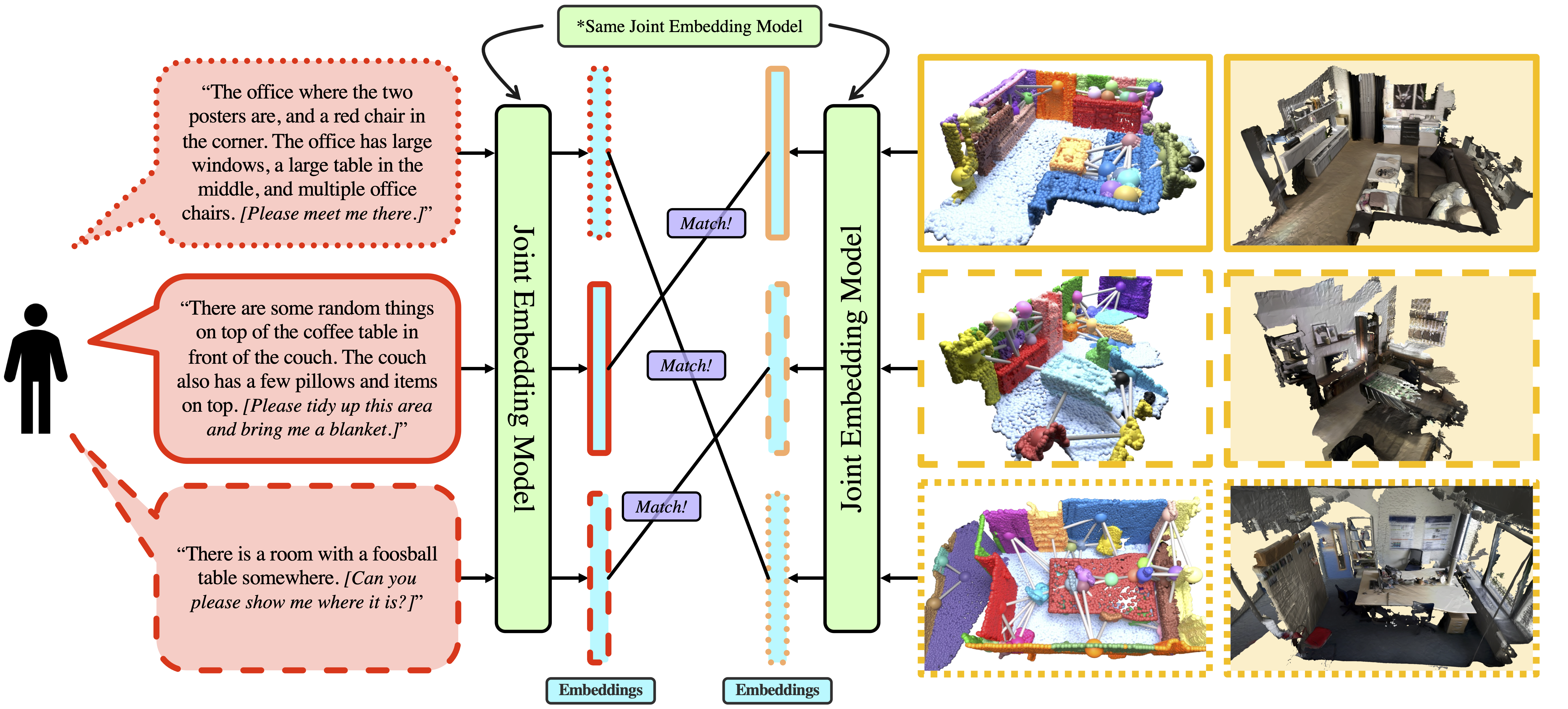

Natural language interfaces for embodied AI are becoming increasingly common in our daily lives. This opens up further opportunities for language-based interaction, such as a user verbally instructing an agent to carry out a task at a specific location, for example, "put the bowls back in the cupboard next to the fridge" or "meet me at the intersection under the red sign." Such interactions require methods that connect natural language to map representations of the environment. To this end, we explore whether an open-set natural language query can be used to identify a scene represented by a 3D scene graph. We call this task "language-based scene retrieval." It is closely related to "coarse localization," but we search for a match within a collection of disjoint scenes rather than necessarily within a single large-scale continuous map. We present Text2SceneGraphMatcher, a scene-retrieval pipeline that learns joint embeddings of text descriptions and scene graphs to determine whether they match.

Given open-set natural language descriptions of scenes, our method shows the potential of using language and scene graphs to perform scene retrieval. We evaluate our method on scenes from the 3DSSG dataset and on a set of human-annotated scenes, and show that it generalizes to open-set, human-annotated language queries. Our joint embedding model also works in a "retrieval-based" fashion: the scene graphs can be embedded in a first step, and a text query is then embedded and compared against them to retrieve the most similar text and scene graph pair.
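The retrieval step described above amounts to a nearest-neighbor lookup over precomputed embeddings. The following is a minimal sketch under stated assumptions, not the Text2SceneGraphMatcher implementation: it assumes cosine similarity as the matching score, uses toy 3-dimensional vectors in place of the outputs of the text and scene-graph encoders, and `SceneGraphIndex` and `normalize` are hypothetical helpers introduced only for illustration.

```python
import math
from typing import List, Sequence, Tuple

Vector = Sequence[float]


def normalize(v: Vector) -> List[float]:
    """Scale a vector to unit L2 norm so a dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in v]


class SceneGraphIndex:
    """Hypothetical offline index of precomputed scene-graph embeddings."""

    def __init__(self) -> None:
        self._ids: List[str] = []
        self._vecs: List[List[float]] = []

    def add(self, scene_id: str, embedding: Vector) -> None:
        # Step 1 (offline): store each scene-graph embedding, normalized once.
        self._ids.append(scene_id)
        self._vecs.append(normalize(embedding))

    def query(self, text_embedding: Vector, k: int = 1) -> List[Tuple[str, float]]:
        # Step 2 (query time): score the text embedding against every stored
        # scene graph and return the k most similar (scene_id, score) pairs.
        q = normalize(text_embedding)
        scores = [
            (sid, sum(a * b for a, b in zip(q, v)))
            for sid, v in zip(self._ids, self._vecs)
        ]
        scores.sort(key=lambda pair: pair[1], reverse=True)
        return scores[:k]


# Toy usage: these vectors are placeholders, not real encoder outputs.
index = SceneGraphIndex()
index.add("scene_a", [1.0, 0.0, 0.0])
index.add("scene_b", [0.0, 1.0, 0.0])
index.add("scene_c", [0.6, 0.8, 0.0])
top = index.query([0.1, 0.9, 0.0], k=2)
print(top)  # scene_b ranks first, scene_c second
```

Normalizing the scene-graph embeddings once at insertion time means each query costs one dot product per stored scene; for large scene collections, a linear scan like this would typically be swapped for an approximate nearest-neighbor index.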